Once you identify that your model suffers from frequent graph re-compilation, check our Handling Dynamic Shapes guide for possible ways to avoid it and eliminate the extra latency.

The Habana bridge compiles a SynapseAI® graph and launches the resulting recipe asynchronously. The bridge caches recipes to avoid recompiling the same graph; this caching is done at the eager-op level as well as at the JIT-graph level. During training, graph compilation is only required for the initial iteration. After that, the same compiled recipe is re-executed every iteration (with new inputs) unless the ops being executed change.
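To make the caching behavior concrete, here is a toy Python model of a recipe cache. It is purely illustrative: it assumes, as a simplification, that a recipe is keyed on the sequence of op names plus the input shapes, which is not a description of the bridge's actual cache key. `RecipeCache` and `fake_compile` are hypothetical names.

```python
from typing import Callable, Dict, Tuple

Shape = Tuple[int, ...]
Key = Tuple[Tuple[str, ...], Tuple[Shape, ...]]


class RecipeCache:
    """Toy recipe cache (hypothetical): compile once per (ops, input shapes) key."""

    def __init__(self, compile_fn: Callable[[Key], str]) -> None:
        self._compile_fn = compile_fn
        self._recipes: Dict[Key, str] = {}
        self.compilations = 0  # number of cache misses, i.e. graph compilations

    def get(self, ops: Tuple[str, ...], shapes: Tuple[Shape, ...]) -> str:
        key = (ops, shapes)
        if key not in self._recipes:
            # Cache miss: unseen op sequence or unseen input shapes -> compile.
            self.compilations += 1
            self._recipes[key] = self._compile_fn(key)
        # Cache hit: re-execute the stored recipe with new input data.
        return self._recipes[key]


def fake_compile(key: Key) -> str:
    # Stand-in for a real graph compilation; it only builds a label.
    ops, shapes = key
    return f"recipe<{'+'.join(ops)}@{shapes}>"


ops = ("matmul", "relu")

# Static shapes: 100 iterations with identical input shapes compile once.
static = RecipeCache(fake_compile)
for _ in range(100):
    static.get(ops, ((128, 784),))
print("static:", static.compilations)   # 1

# Dynamic shapes: each new sequence length forces a new compilation.
dynamic = RecipeCache(fake_compile)
for seq_len in [12, 30, 12, 45, 30, 7]:
    dynamic.get(ops, ((8, seq_len),))
print("dynamic:", dynamic.compilations)  # 4 (distinct lengths 12, 30, 45, 7)
```

In this model, changing either the ops or any input shape produces a new key, which is why dynamically shaped inputs keep triggering compilations.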

In some cases, SynapseAI has to re-compile the graph. This is mostly due to dynamically shaped inputs, such as varying sentence lengths in language models or differing image resolutions in image models. Frequent graph recompilations can lead to a longer time-to-train.

The following step-by-step process shows how to detect frequent graph re-compilations on the SynapseAI platform.

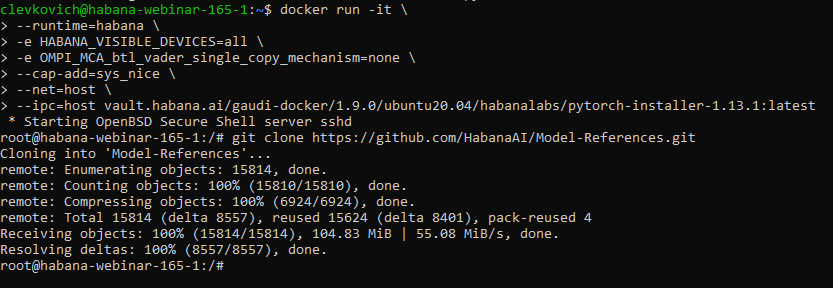

Start Docker

Make sure to use the latest PyTorch container from the Habana Vault:

```bash
docker pull vault.habana.ai/gaudi-docker/1.9.0/ubuntu20.04/habanalabs/pytorch-installer-1.13.1:latest

docker run -it \
  --runtime=habana \
  -e HABANA_VISIBLE_DEVICES=all \
  -e OMPI_MCA_btl_vader_single_copy_mechanism=none \
  --cap-add=sys_nice \
  --net=host \
  --ipc=host vault.habana.ai/gaudi-docker/1.9.0/ubuntu20.04/habanalabs/pytorch-installer-1.13.1:latest
```

Prepare the model

We will use an MNIST example model for this tutorial.

Clone the Model References repository inside the container that you have just started:

```bash
git clone https://github.com/HabanaAI/Model-References.git
```

Move to the subdirectory containing the hello_world example:

```bash
cd Model-References/PyTorch/examples/computer_vision/hello_world/
```

Update PYTHONPATH to include the Model-References repository and set PYTHON to the Python executable:

```bash
# Model-References is four directory levels above hello_world/, so use an absolute path
export PYTHONPATH=$PYTHONPATH:$(realpath ../../../..)
export PYTHON=/usr/bin/python3.8
```

Training on a Single Gaudi (HPU) Device

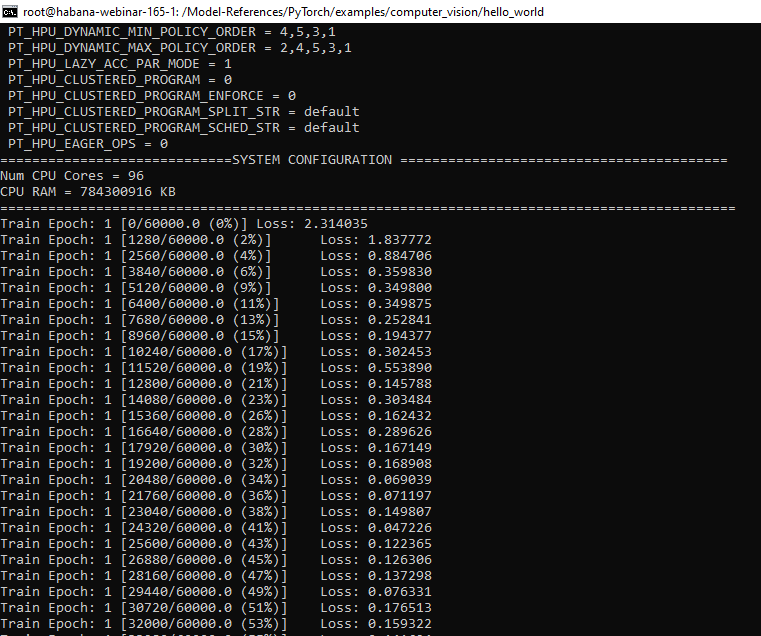

Run training on a single HPU in BF16 with HMP (Habana Mixed Precision) enabled:

```bash
$PYTHON mnist.py --batch-size=128 --epochs=1 --lr=1.0 \
  --gamma=0.7 --hpu --hmp \
  --hmp-bf16=ops_bf16_mnist.txt \
  --hmp-fp32=ops_fp32_mnist.txt \
  --use_lazy_mode
```

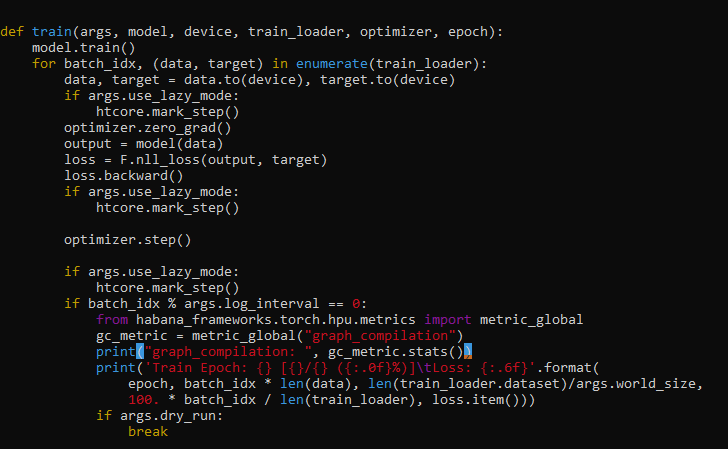

Detect re-compilations

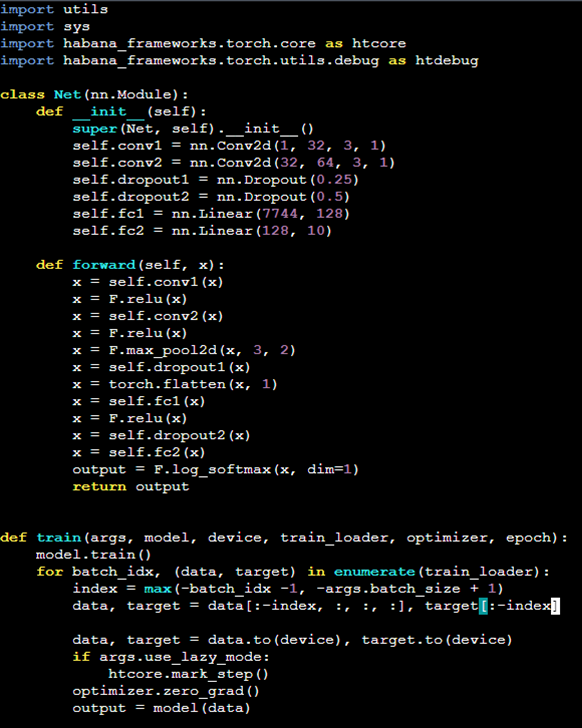

We will add code that introduces artificial dynamicity by constantly changing the batch size, and we will use the Metric APIs to detect the resulting frequent recompilations.

Edit mnist.py and add the following lines at line 61:

```python
from habana_frameworks.torch.hpu.metrics import metric_global

# Global metric that accumulates graph-compilation statistics for this process
gc_metric = metric_global("graph_compilation")
print("graph_compilation: ", gc_metric.stats())
```
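A single printout only shows cumulative totals. To see how many compilations happened during a specific stretch of training (for example, one epoch), you can compare two snapshots. The sketch below assumes `stats()` returns a list of `[name, value]` pairs that includes a `TotalNumber` entry (alongside entries such as `TotalTime` and `AvgTime`); check the output on your release. `stats_to_dict` and `new_compilations` are hypothetical helpers, not part of the Habana API.

```python
from typing import Dict, Sequence, Union

Number = Union[int, float]


def stats_to_dict(stats: Sequence[Sequence]) -> Dict[str, Number]:
    """Convert [[name, value], ...] pairs (assumed stats() format) into a dict."""
    return {str(name): value for name, value in stats}


def new_compilations(before: Sequence[Sequence], after: Sequence[Sequence]) -> int:
    """Graph compilations that happened between two stats() snapshots."""
    total_after = stats_to_dict(after).get("TotalNumber", 0)
    total_before = stats_to_dict(before).get("TotalNumber", 0)
    return int(total_after - total_before)


# Hypothetical usage on a Gaudi machine:
#   gc_metric = metric_global("graph_compilation")
#   before = gc_metric.stats()
#   ...run one epoch of training...
#   print("compilations this epoch:", new_compilations(before, gc_metric.stats()))
```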

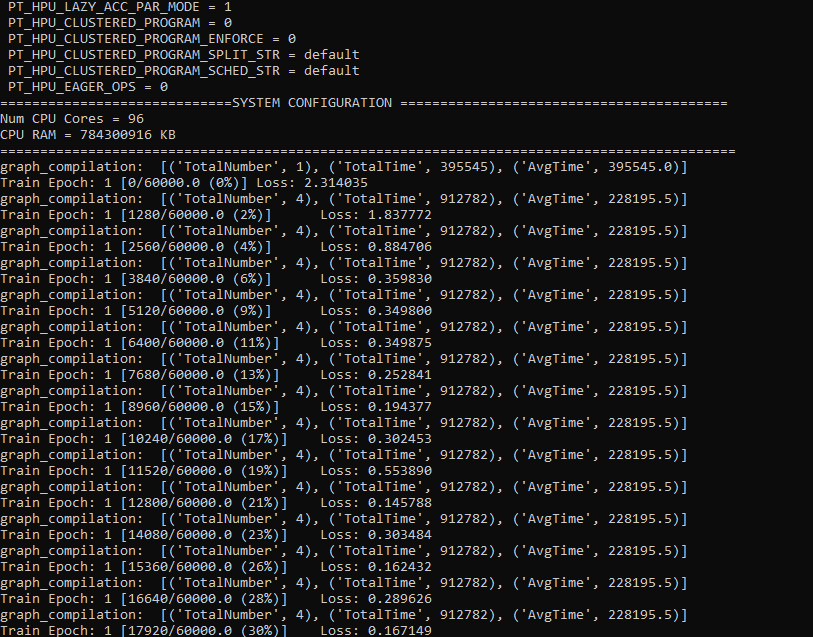

Now run the training code again. You will notice some graph compilations occur.

```bash
$PYTHON mnist.py --batch-size=128 --epochs=1 --lr=1.0 \
  --gamma=0.7 --hpu --hmp \
  --hmp-bf16=ops_bf16_mnist.txt \
  --hmp-fp32=ops_fp32_mnist.txt \
  --use_lazy_mode
```

Now add some artificial dynamicity by inserting the following code at line 46:

```python
# Slice each batch to a different size: 1, 2, 3, ... up to batch_size - 1
index = max(-batch_idx - 1, -args.batch_size + 1)
data, target = data[:-index, :, :, :], target[:-index]
```
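To see why this snippet causes so many recompilations, it helps to work out the batch size it produces at each step. The pure-Python sketch below mirrors the slicing arithmetic, assuming the snippet runs inside the `for batch_idx, (data, target) in enumerate(train_loader)` loop with `--batch-size=128`. `effective_batch_size` is a hypothetical helper; its `available` parameter stands for the number of samples the loader actually delivered, which can be smaller for the final batch of an epoch.

```python
def effective_batch_size(batch_idx: int, batch_size: int, available: int) -> int:
    """Number of samples left after `data[:-index]`, as in the snippet above."""
    index = max(-batch_idx - 1, -batch_size + 1)  # always negative
    # Slicing a range follows the same stop-index rule as slicing a tensor.
    return len(range(available)[:-index])


batch_size = 128
sizes = [effective_batch_size(i, batch_size, batch_size) for i in range(200)]
print(sizes[:5])        # [1, 2, 3, 4, 5]
print(sizes[125:130])   # [126, 127, 127, 127, 127]
print(len(set(sizes)))  # 127 distinct batch sizes, i.e. 127 distinct input shapes
```

Each distinct batch size is a new input shape, so in this model roughly the first 127 iterations each need a freshly compiled graph before the size settles at 127.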

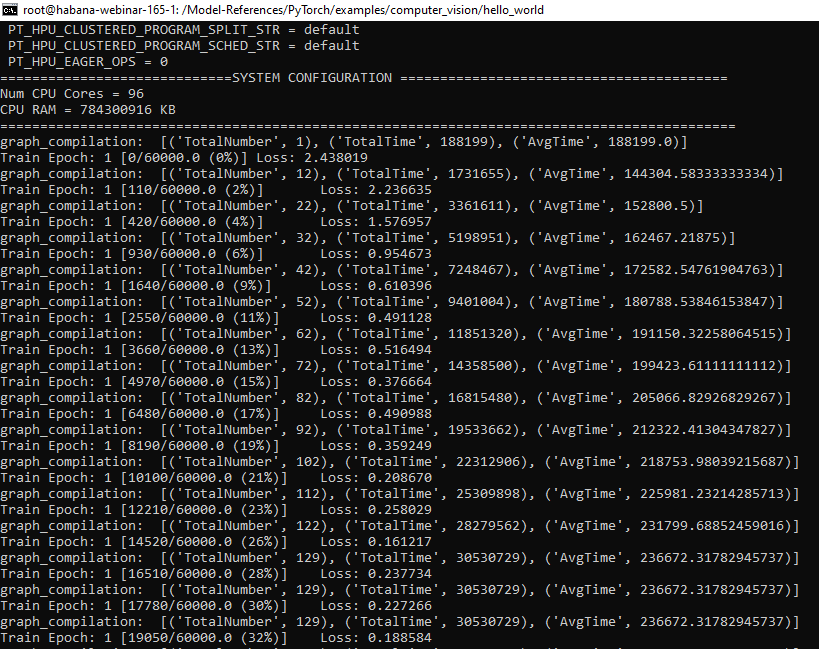

Run the training code again. This time, graph compilation is much more frequent:

```bash
$PYTHON mnist.py --batch-size=128 --epochs=1 --lr=1.0 \
  --gamma=0.7 --hpu --hmp \
  --hmp-bf16=ops_bf16_mnist.txt \
  --hmp-fp32=ops_fp32_mnist.txt \
  --use_lazy_mode
```

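As long as the batch size keeps changing, each new size triggers another re-compilation. With the snippet above, the batch size grows by one sample per iteration until it reaches args.batch_size - 1 (127 here), so roughly the first 127 iterations each see a new input shape. You will probably also notice that training is slower.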

What’s next?

Feel free to use the same technique to check how frequently graph recompilations occur in your code. If needed, see our Handling Dynamic Shapes guide for possible resolutions.
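When applying this technique to your own training loop, it can help to log which steps triggered compilations rather than only the total. Below is a minimal sketch that assumes you supply a `read_count` callable returning the cumulative compilation count, for example the `TotalNumber` entry of `metric_global("graph_compilation").stats()` if your release reports it that way. `RecompilationMonitor` is a hypothetical helper, not a Habana API.

```python
from typing import Callable, List, Tuple


class RecompilationMonitor:
    """Records the steps during which the cumulative compilation count grew."""

    def __init__(self, read_count: Callable[[], int]) -> None:
        self._read_count = read_count
        self._last = read_count()
        self.events: List[Tuple[int, int]] = []  # (step, new compilations)

    def after_step(self, step: int) -> int:
        """Call once after each training step; returns the compilations it added."""
        current = self._read_count()
        delta = current - self._last
        self._last = current
        if delta > 0:
            self.events.append((step, delta))
        return delta

    def summary(self) -> str:
        total = sum(delta for _, delta in self.events)
        return f"{total} compilation(s) across {len(self.events)} step(s)"


# Hypothetical wiring on a Gaudi machine (the stats() format is an assumption):
#   from habana_frameworks.torch.hpu.metrics import metric_global
#   gc = metric_global("graph_compilation")
#   monitor = RecompilationMonitor(lambda: int(dict(gc.stats())["TotalNumber"]))
#   for step, batch in enumerate(loader):
#       train_step(batch)
#       monitor.after_step(step)
#   print(monitor.summary(), monitor.events[:10])
```

A steady stream of events long after the first few steps is a hint that input shapes are still varying. If your loop flushes graphs outside the code you instrument, a compilation may be attributed to a neighboring step, so treat step numbers as approximate.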