What is fine-tuning?

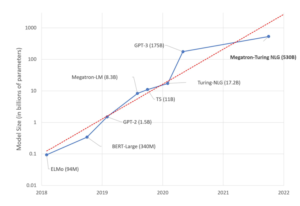

Training models from scratch can be expensive, especially with today's large-scale models. Depending on the model size, the estimated cost of the hardware needed to train such models ranges from thousands to millions of dollars. Fine-tuning is the process of taking a neural network that has already been trained (usually called a pre-trained model) and updating it so that it performs a specific task. As long as the original task is similar to the new one, starting from a pre-trained model lets us take full advantage of the features the network has already learned to extract, without having to design and train a model from scratch.
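To make the idea concrete, here is a deliberately tiny sketch in plain Python, with no deep learning framework. A one-feature linear model stands in for a neural network, and the two synthetic tasks and all hyperparameters are assumptions chosen only for illustration. Both runs get the same small training budget on the new task; the only difference is whether they start from zero or from weights learned on a related task.

```python
# Toy illustration of fine-tuning vs. training from scratch (pure Python).
# Assumption: a linear model y = w*x + b stands in for a neural network.


def mse(w, b, data):
    """Mean squared error of the model on a list of (x, y) pairs."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def train(w, b, data, lr=0.05, steps=20):
    """Full-batch gradient descent on mean squared error, starting from (w, b)."""
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Original task: y = 2.0x + 1.0; new, related task: y = 2.2x + 0.8
old_task = [(k / 10, 2.0 * k / 10 + 1.0) for k in range(-20, 21)]
new_task = [(k / 10, 2.2 * k / 10 + 0.8) for k in range(-20, 21)]

# "Pre-training": train to convergence on the original task
pretrained_w, pretrained_b = train(0.0, 0.0, old_task, steps=500)

# Same small budget on the new task: random-free "scratch" init vs. pre-trained init
scratch = train(0.0, 0.0, new_task, steps=20)
finetuned = train(pretrained_w, pretrained_b, new_task, steps=20)

print(f"from scratch: loss={mse(*scratch, new_task):.4f}")
print(f"fine-tuned:   loss={mse(*finetuned, new_task):.4f}")
```

With these settings the run that starts from the pre-trained weights ends with a clearly lower loss than the run from scratch; real fine-tuning applies the same principle to millions or billions of parameters.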

In this blog post, we focus on transformers. Pre-trained transformers can be fine-tuned quickly for numerous downstream tasks and perform well on them. Consider a pre-trained transformer model that already understands language: fine-tuning then trains it to perform question answering, language generation, named-entity recognition, sentiment analysis, and other such tasks.

Given the cost and complexity of training large models, using pre-trained models is an appealing approach, and many pre-trained models are publicly available. Here we focus on the most popular open-source transformer library, Hugging Face Transformers. The Hugging Face Hub hosts a wide variety of pre-trained transformer models, and the Transformers library makes it easy to load these models and fine-tune them.

Can models pre-trained on GPUs be fine-tuned on Gaudi, or vice versa?

Although pre-training is done on a specific hardware architecture, the saved pre-trained model can be used on different ones. For example, you can pre-train a model on the Habana Gaudi AI processor, save it, and later fine-tune it on a CPU. Or you can load a publicly available model that was originally pre-trained on GPUs and continue training or fine-tuning it on Gaudi.
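At the level of plain PyTorch, this portability comes down to saving the model's weights (its state dict) and loading them onto whatever device is available; Hugging Face's `save_pretrained` and `from_pretrained` handle this for you. The minimal sketch below assumes only that PyTorch is installed and uses a tiny `nn.Linear` layer as a stand-in for a real pre-trained model. `map_location="cpu"` lets the load succeed even if the checkpoint was written from tensors on another device, such as a CUDA GPU.

```python
import io

import torch
import torch.nn as nn

# Stand-in for a pre-trained network (assumption: a real model behaves the same way)
model = nn.Linear(4, 2)

# "Pre-training" side: save only the weights (the state dict), here to an in-memory buffer
buffer = io.BytesIO()
torch.save(model.state_dict(), buffer)
buffer.seek(0)

# "Fine-tuning" side: rebuild the same architecture and load the weights onto the CPU.
# map_location remaps tensors that were saved from another device, e.g. a CUDA GPU.
state_dict = torch.load(buffer, map_location="cpu")
restored = nn.Linear(4, 2)
restored.load_state_dict(state_dict)

# From here, restored could be moved to any available device and trained further
print({name: tuple(t.shape) for name, t in restored.state_dict().items()})
```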

Getting started with Gaudi and Hugging Face

Set up an AWS EC2 DL1 instance with the latest Habana® SynapseAI® Software Suite. You can find the full instructions here.
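Once the instance is up, one way to confirm that the drivers can see the Gaudi devices is the `hl-smi` command-line tool installed with the Habana drivers. The sketch below simply wraps it from Python; the function name `hl_smi_report` is hypothetical, and it returns `None` instead of failing when `hl-smi` is not on the `PATH`.

```python
import shutil
import subprocess
from typing import Optional


def hl_smi_report() -> Optional[str]:
    """Return the output of hl-smi, or None if the tool is not on the PATH."""
    exe = shutil.which("hl-smi")
    if exe is None:
        return None
    # check=False: report whatever hl-smi prints instead of raising on a non-zero exit code
    result = subprocess.run([exe], capture_output=True, text=True, check=False)
    return result.stdout + result.stderr


if __name__ == "__main__":
    report = hl_smi_report()
    print(report if report is not None else "hl-smi not found; check the SynapseAI driver installation")
```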

Start Docker

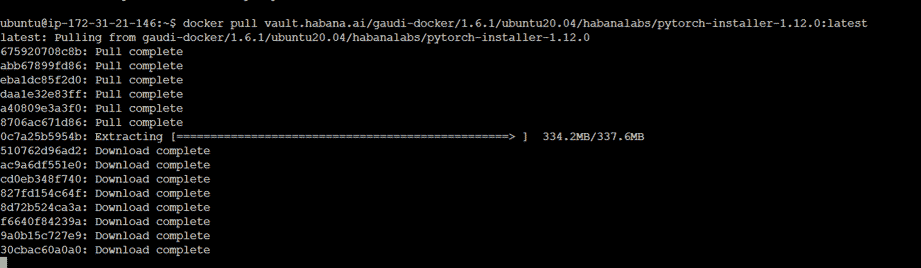

Make sure to use the latest PyTorch container from here.

```bash
docker pull vault.habana.ai/gaudi-docker/1.6.1/ubuntu20.04/habanalabs/pytorch-installer-1.12.0:latest
```

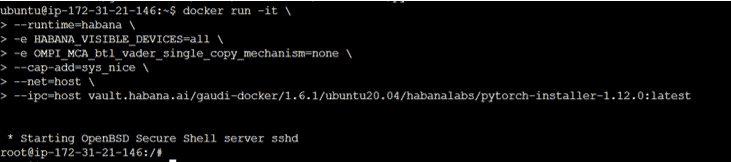

```bash
docker run -it \
  --runtime=habana \
  -e HABANA_VISIBLE_DEVICES=all \
  -e OMPI_MCA_btl_vader_single_copy_mechanism=none \
  --cap-add=sys_nice \
  --net=host \
  --ipc=host \
  vault.habana.ai/gaudi-docker/1.6.1/ubuntu20.04/habanalabs/pytorch-installer-1.12.0:latest
```
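If you script the environment setup, the same `docker run` invocation can be kept as an argument list, which avoids shell-quoting and line-continuation mistakes. The minimal sketch below assumes nothing beyond the Python standard library; the helper name `docker_run_argv` is hypothetical, and the comments summarize what each flag does.

```python
import shlex
from typing import List

# Image tag taken from the docker pull command above
IMAGE = "vault.habana.ai/gaudi-docker/1.6.1/ubuntu20.04/habanalabs/pytorch-installer-1.12.0:latest"


def docker_run_argv(image: str = IMAGE) -> List[str]:
    """Return the docker run invocation from this guide as an argument list."""
    return [
        "docker", "run", "-it",
        "--runtime=habana",  # use the Habana container runtime
        "-e", "HABANA_VISIBLE_DEVICES=all",  # expose every Gaudi device to the container
        # disable Open MPI's shared-memory single-copy mechanism, which often fails in containers
        "-e", "OMPI_MCA_btl_vader_single_copy_mechanism=none",
        "--cap-add=sys_nice",  # allow processes to adjust scheduling priority and CPU affinity
        "--net=host",  # share the host's network stack
        "--ipc=host",  # share the host's IPC namespace (shared memory) across processes
        image,
    ]


if __name__ == "__main__":
    # Print a copy-pasteable shell command; pass the list to subprocess.run() to execute it instead
    print(shlex.join(docker_run_argv()))
```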

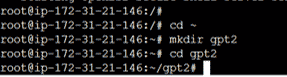

Create the model folder

```bash
cd ~
mkdir gpt2
cd gpt2
```

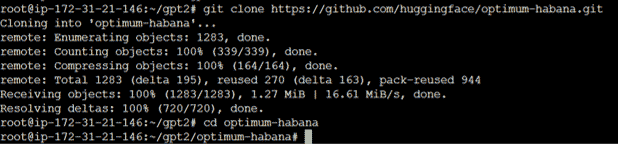

Clone Optimum Habana from Hugging Face and set up the requirements

```bash
git clone https://github.com/huggingface/optimum-habana.git
cd optimum-habana
python3 setup.py install
cd examples/language-modeling
pip install -r requirements.txt
```

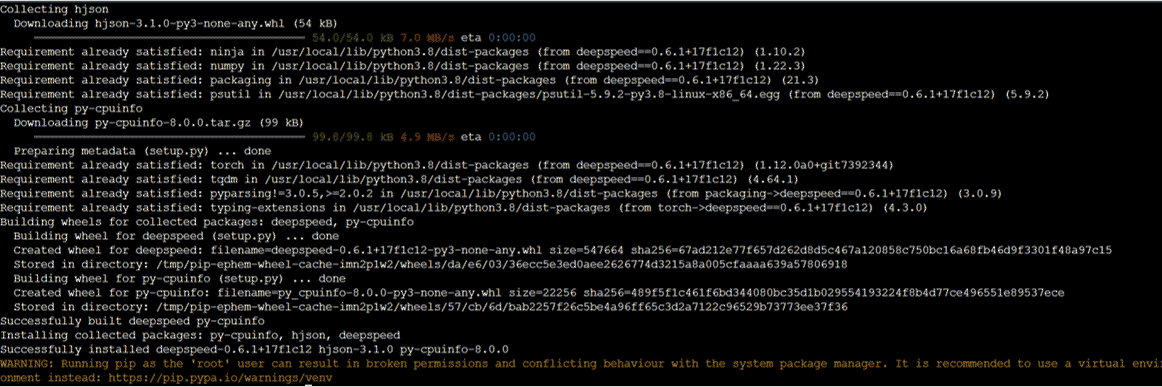

Install Habana DeepSpeed

```bash
pip install git+https://github.com/HabanaAI/DeepSpeed.git
```
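Before launching a long training run, it can help to confirm that the installs above succeeded. The sketch below uses only the standard library's `importlib.util.find_spec`; the module list and the helper name `is_installed` are assumptions for illustration, so adjust them to your setup.

```python
import importlib.util

# Modules this guide relies on; the list is an assumption, adjust it to your setup
REQUIRED_MODULES = [
    "habana_frameworks.torch",  # Habana PyTorch bridge (preinstalled in the container)
    "optimum.habana",  # Optimum Habana, installed from the cloned repository
    "transformers",
    "datasets",
    "deepspeed",  # Habana's DeepSpeed fork
]


def is_installed(module_name: str) -> bool:
    """Return True if the module can be located (parent packages of dotted names get imported)."""
    try:
        return importlib.util.find_spec(module_name) is not None
    except (ImportError, ValueError):
        # find_spec imports the parents of dotted names and raises if they are missing
        return False


if __name__ == "__main__":
    for name in REQUIRED_MODULES:
        print(f"{name:25s} {'OK' if is_installed(name) else 'MISSING'}")
```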

Fine-tune the model

Switch back to the gpt2 folder:

```bash
cd ~/gpt2
```

Then create a new file main.py with the content below:

from optimum.habana.distributed import DistributedRunner

training_args = {

"output_dir": "/tmp/clm_gpt2_xl",

"dataset_name": "wikitext",

"dataset_config_name": "wikitext-2-raw-v1",

"num_train_epochs": 1,

"per_device_train_batch_size": 4,

"per_device_eval_batch_size": 4,

"gradient_checkpointing": True,

"do_train": True,

"do_eval": True,

"overwrite_output_dir": True,

}

model_name = "gpt2-xl"

training_args["model_name_or_path"] = model_name

training_args["use_habana"] = True # Whether to use HPUs or not

training_args["use_lazy_mode"] = True # Whether to use lazy or eager mode

training_args["gaudi_config_name"] = "Habana/gpt2" # Gaudi configuration to use

training_args["deepspeed"] = "optimum-habana/tests/configs/deepspeed_zero_2.json"

# Build the command to execute

training_args_command_line = " ".join(f"--{key} {value}" for key, value in training_args.items())

command = f"optimum-habana/examples/language-modeling/run_clm.py {training_args_command_line}"

# Instantiate a distributed runner

distributed_runner = DistributedRunner(

command_list=[command], # The command(s) to execute

world_size=8, # The number of HPUs

use_deepspeed=True, # Enable DeepSpeed

)

# Launch training

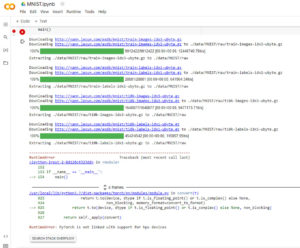

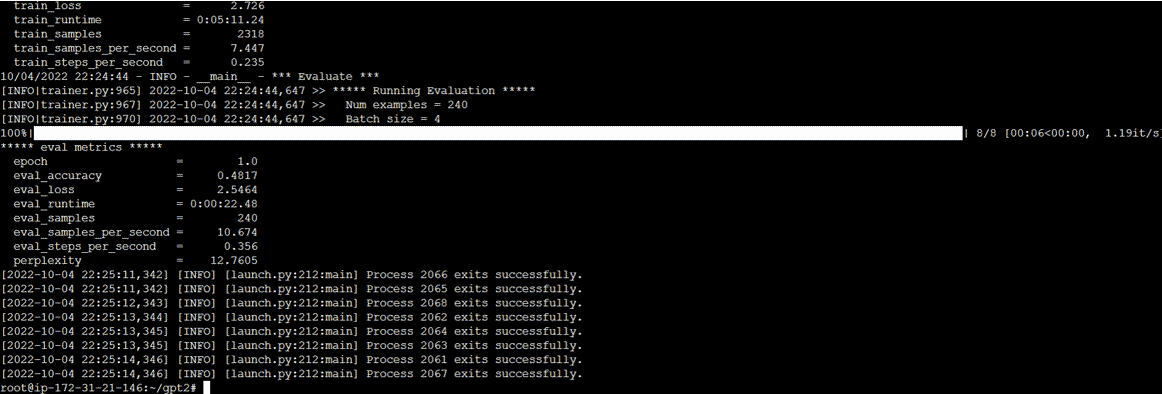

ret_code = distributed_runner.run()The code will fine tune the gpt2 pretrained model using the wiki text dataset. It will run in distributed mode if multiple Gaudis are available. Note that for fine tuning, the argument “model_name_or_path” is used and it loads the model checkpoint for weights initialization.

Now run the code using the command

python3.8 main.pyThe text fine-tuning results appear as below:
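Because `do_eval` is enabled, the evaluation loss is the main number to look at after training. The sketch below assumes that the Optimum Habana copy of `run_clm.py` follows the upstream Transformers example and saves its evaluation metrics, including `eval_loss`, to `eval_results.json` in `output_dir`. It recomputes perplexity as the exponential of the mean per-token cross-entropy loss; the function names are hypothetical.

```python
import json
import math
from pathlib import Path

OUTPUT_DIR = Path("/tmp/clm_gpt2_xl")  # output_dir from main.py


def perplexity_from_loss(eval_loss: float) -> float:
    """Perplexity is the exponential of the mean per-token cross-entropy loss."""
    try:
        return math.exp(eval_loss)
    except OverflowError:
        return float("inf")


def summarize_eval(output_dir: Path = OUTPUT_DIR) -> str:
    # Assumption: run_clm.py saved its evaluation metrics to eval_results.json
    with open(output_dir / "eval_results.json") as f:
        metrics = json.load(f)
    loss = metrics["eval_loss"]
    return f"eval_loss={loss:.4f} perplexity={perplexity_from_loss(loss):.2f}"


if __name__ == "__main__":
    results_file = OUTPUT_DIR / "eval_results.json"
    if results_file.exists():
        print(summarize_eval())
    else:
        print(f"{results_file} not found; has main.py finished with do_eval enabled?")
```

A lower perplexity on the evaluation split means the fine-tuned model assigns higher probability to held-out WikiText-2 text.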

Use the new fine-tuned model for text prediction

Create a file test.py with the content below

# The sequence to complete

prompt_text = "Contrary to the common belief, Chocolate is actually good for you because "

import torch

from transformers import GPT2LMHeadModel, GPT2Tokenizer

import habana_frameworks.torch.core as htcore

path_to_model = "/tmp/clm_gpt2_xl" # the folder where everything related to our run was saved

device = torch.device("hpu")

# Load the tokenizer and the model

tokenizer = GPT2Tokenizer.from_pretrained(path_to_model)

model = GPT2LMHeadModel.from_pretrained(path_to_model)

model.to(device)

# Encode the prompt

encoded_prompt = tokenizer.encode(prompt_text, add_special_tokens=False, return_tensors="pt")

encoded_prompt = encoded_prompt.to(device)

# Generate the following of the prompt

output_sequences = model.generate(

input_ids=encoded_prompt,

max_length=16 + len(encoded_prompt[0]),

do_sample=True,

num_return_sequences=1,

)

# Remove the batch dimension when returning multiple sequences

if len(output_sequences.shape) > 2:

output_sequences.squeeze_()

generated_sequences = []

for generated_sequence_idx, generated_sequence in enumerate(output_sequences):

print(f"=== GENERATED SEQUENCE {generated_sequence_idx + 1} ===")

generated_sequence = generated_sequence.tolist()

# Decode text

text = tokenizer.decode(generated_sequence, clean_up_tokenization_spaces=True)

# Remove all text after the stop token

text = text[: text.find(".")]

# Add the prompt at the beginning of the sequence. Remove the excess text that was used for pre-processing

total_sequence = (

prompt_text + text[len(tokenizer.decode(encoded_prompt[0], clean_up_tokenization_spaces=True)) :]

)

generated_sequences.append(total_sequence)

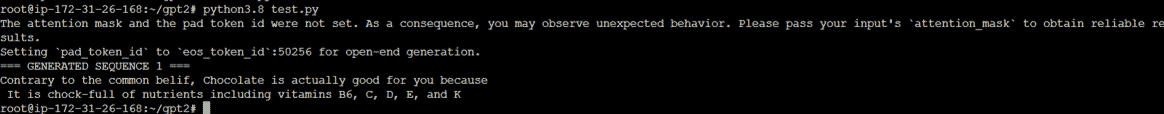

print(total_sequence)Now run the code using the command

python3.8 test.pyThe text prediction result appears as below:
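Because `test.py` passes `do_sample=True`, each run can produce a different completion: instead of always choosing the most likely next token (greedy decoding), `generate` draws the next token at random from the model's predicted distribution. The pure-Python sketch below illustrates one such decoding step with a made-up four-token vocabulary and hypothetical scores. It is not the Transformers implementation, which also applies filters such as top-k by default and repeats the step until `max_length` is reached.

```python
import math
import random
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Turn raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    top = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def next_token(logits: List[float], do_sample: bool, rng: random.Random, temperature: float = 1.0) -> int:
    """Pick the index of the next token, greedily or by sampling."""
    if not do_sample:
        # Greedy decoding: always take the highest-scoring token
        return max(range(len(logits)), key=lambda i: logits[i])
    # Sampling: draw one token with probability given by softmax(logits / temperature)
    probs = softmax(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


# Hypothetical vocabulary and scores for a single decoding step
vocab = ["it", "cocoa", "the", "antioxidants"]
logits = [1.0, 2.5, 0.3, 2.4]
rng = random.Random(0)  # fixed seed so the demo is reproducible

print("greedy :", vocab[next_token(logits, do_sample=False, rng=rng)])
print("sampled:", [vocab[next_token(logits, do_sample=True, rng=rng)] for _ in range(5)])
```

`generate` also accepts a `temperature` argument: lowering it concentrates probability on the top-scoring tokens and makes completions more repetitive, while raising it makes them more varied.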

What’s next?

You can try different prompts and different configurations for running the model. You can find more information on the Optimum Habana GitHub page from Hugging Face and on the Habana Developer site.