DeepSpeed [2] is a popular deep learning software library that facilitates memory-efficient training of large language models. DeepSpeed includes ZeRO (Zero Redundancy Optimizer), a memory-efficient approach for distributed training [5]. ZeRO has multiple stages of memory-efficient optimizations, and Habana’s SynapseAI® software currently supports ZeRO-1 and ZeRO-2. In this article, we explain what ZeRO is and how it is useful for training LLMs, with a brief technical overview of the ZeRO-1 and ZeRO-2 stages of memory optimization. More details on DeepSpeed support in Habana SynapseAI software can be found in the Habana DeepSpeed User Guide. Now, let us dive into why we need memory-efficient training for LLMs and how ZeRO can help achieve this.

Emergence of Large Language Models

Large Language Models (LLMs) keep getting larger, with model sizes growing by 10x in only a few years, as shown in Figure 1 [7]. Increases in model size offer considerable gains in model accuracy. LLMs such as GPT-2 (1.5B), Megatron-LM (8.3B), T5 (11B), Turing-NLG (17B), Chinchilla (70B), GPT-3 (175B), OPT-175B, and BLOOM (176B) have been released to excel in various tasks such as natural language understanding, question answering, summarization, translation, and natural language generation. As the size of LLMs keeps growing, how can we efficiently train such large models? Of course, the answer is “parallelization”.

Parallelizing Model Training

Data Parallelism (DP) and Model Parallelism are two well-known techniques in distributed training. In data parallel training, each data parallel process/worker has a copy of the complete model, but the dataset is split into Nd parts, where Nd is the number of data parallel processes. For example, if you have Nd devices, you split a mini-batch into Nd parts, one for each device. You then feed the respective part through the model on each device and obtain gradients for each split of the mini-batch. Once all the gradients are available (this requires synchronization), we calculate their average and use it to update the model parameters. Then we move on to the next iteration of model training. The degree of data parallelism is the number of splits and is denoted by Nd.
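The data parallel step described above can be sketched in a few lines of plain Python. This is a minimal single-process simulation, not how a framework implements it: the one-weight linear model, the function names and the learning rate are all assumptions chosen for illustration, and the "all-reduce" is just an average over a Python list.

```python
from typing import List


def split_batch(batch: List[float], num_workers: int) -> List[List[float]]:
    """Split a mini-batch into num_workers equal parts (size must divide evenly)."""
    assert len(batch) % num_workers == 0, "batch size must be divisible by Nd"
    shard = len(batch) // num_workers
    return [batch[i * shard:(i + 1) * shard] for i in range(num_workers)]


def local_gradient(w: float, xs: List[float], ys: List[float]) -> float:
    """Gradient of the mean squared error of y ~ w * x on one worker's split."""
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def data_parallel_step(w: float, xs: List[float], ys: List[float],
                       num_workers: int, lr: float) -> float:
    """One training step: every worker holds the same w and sees 1/Nd of the batch."""
    x_parts = split_batch(xs, num_workers)
    y_parts = split_batch(ys, num_workers)
    # Each worker computes gradients on its own split of the mini-batch.
    grads = [local_gradient(w, xp, yp) for xp, yp in zip(x_parts, y_parts)]
    # Synchronization point: average the gradients across workers (simulated all-reduce).
    avg_grad = sum(grads) / num_workers
    # Every worker applies the same update, so the model copies stay identical.
    return w - lr * avg_grad


xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]
new_w = data_parallel_step(0.0, xs, ys, num_workers=2, lr=0.01)
```

With equal-sized splits, the averaged gradient matches the gradient computed over the whole mini-batch on a single device, which is why data parallel training leaves the math of the update unchanged.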

With model parallelism, instead of partitioning the data, we divide the model into Nm parts, where Nm is typically the number of devices. The model can be split either vertically or horizontally. Intra-layer model parallelism is vertical splitting, where each model layer can be split across multiple devices. Inter-layer model parallelism (also known as pipeline parallelism) is horizontal splitting, where the model is split at one or more layer boundaries. Model parallelism incurs considerable communication overhead. Hence it is effective inside a single node but has scaling issues across nodes due to communication overheads. It is also complex to implement.

Data parallel training is simpler to implement and has good compute/communication efficiency, but it does not reduce the memory footprint on a single device, since a full copy of the model is kept on each device. As models keep growing, it may no longer be possible to hold the full model in a single device’s memory. Typically, models with more than ~1B parameters may run out of memory on first-generation Gaudi HPUs, which have only 32GB of HBM. Second-generation Gaudi2 HPUs, which have 96GB of HBM, can fit much larger models fully in memory. However, as model sizes keep growing exponentially to 500B parameters and above, memory footprint becomes the main bottleneck in model training. So how do we reduce the memory footprint so that we can support data parallel training of large models? To answer that question, we need to know what constitutes the major memory overheads in training large models on deep learning hardware. So, let us look at this point first.

Where did all my memory go?

The major consumer of memory is “model states”. Model states include the tensors representing the optimizer states, gradients, and parameters. The second consumer of memory is “residual states”, which include activations, temporary buffers, and unusable fragmented memory. There are two key observations to note regarding memory consumption during training:

- Model states often consume the largest amount of memory during training. Data parallel training replicates the entire model states across all data parallel processes. This leads to redundant memory consumption.

- We maintain all the model states required over the entire training process statically, even though not all model states are required all the time during the training.

These observations lead us to consider how we can reduce the redundant memory consumption due to replication of model states and maintain only needed values during different stages of a training iteration.

Why do model states need so much memory? One of the culprits is the optimizer states. Let us look at Adam, a popular optimizer used for DL training. Adam is an extension of the standard stochastic gradient descent algorithm. The Adam optimizer adapts the learning rates based on running averages of the first moment of the gradients (the mean) and of the second moment of the gradients (the uncentered variance). Hence, to compute the parameter updates, it needs to store two optimizer states for each parameter, namely the time-averaged momentum and the variance of the gradients. In addition, the memory requirement is exacerbated further when deploying mixed precision training, as we discuss next.
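To make the two per-parameter states concrete, here is a minimal sketch of one Adam update in plain Python. It follows the standard published Adam update rule; the function name and the default hyperparameters are assumptions for illustration and are not taken from any particular library.

```python
import math
from typing import List


def adam_step(params: List[float], grads: List[float],
              m: List[float], v: List[float], step: int,
              lr: float = 1e-3, beta1: float = 0.9,
              beta2: float = 0.999, eps: float = 1e-8) -> None:
    """Update params in place. m and v are the two optimizer states,
    each holding one value per parameter. step counts from 1."""
    for i, g in enumerate(grads):
        # State 1: running average of the gradient (momentum).
        m[i] = beta1 * m[i] + (1.0 - beta1) * g
        # State 2: running average of the squared gradient (uncentered variance).
        v[i] = beta2 * v[i] + (1.0 - beta2) * g * g
        # Bias correction for the zero-initialized running averages.
        m_hat = m[i] / (1.0 - beta1 ** step)
        v_hat = v[i] / (1.0 - beta2 ** step)
        params[i] -= lr * m_hat / (math.sqrt(v_hat) + eps)


params = [1.0, -2.0]
m = [0.0] * len(params)   # one momentum value per parameter
v = [0.0] * len(params)   # one variance value per parameter
adam_step(params, grads=[0.5, -0.25], m=m, v=v, step=1)
```

Note that `m` and `v` are each as large as the parameter list itself, which is where the extra optimizer memory comes from.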

The state-of-the-art approach to training large models is mixed precision (BF16/FP32) training, where parameters and activations are stored as BF16. During mixed-precision training, both the forward and backward propagation are performed using BF16 weights and activations. However, to effectively compute and apply the updates at the end of the backward propagation, the mixed-precision optimizer keeps all of its states in FP32, including an FP32 copy of the parameters. Once the optimizer updates are done, the BF16 model parameters are updated from the optimizer’s FP32 copy of the parameters.

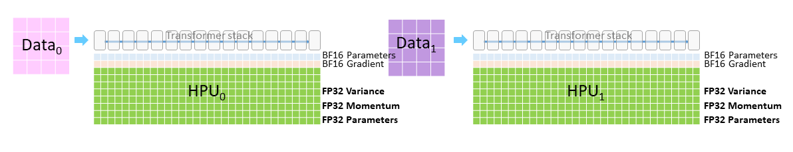

Both Habana Gaudi2 and first-generation Gaudi HPUs support the BF16 data type. As seen in Figure 2, which shows a simple data parallel training setup with 2 data parallel units, we need memory to hold the following:

- BF16 parameters

- BF16 gradients

- FP32 optimizer states which includes FP32 momentum of the gradients, FP32 variance of the gradients and FP32 Parameters

For instance, let us consider a model with N parameters, trained using Adam optimizer with mixed precision training. We will need 2N bytes for BF16 parameters and 2N bytes for BF16 gradients. For the optimizer states, we need 4N bytes for FP32 parameters, 4N bytes for the variance of the gradients and 4N bytes for the momentum of the gradients. Hence optimizer states require a total of 12N bytes of memory. While our example optimizer was Adam, different optimizers would have different degrees of extra memory states needed. Hence to generalize the memory requirements associated with optimizer states, we denote it as KN where N is the number of model parameters and K is an optimizer specific constant. For Adam optimizer, K is 12.

Considering a model with 1 billion parameters, optimizer states end up consuming 12 GB, which is huge compared to the 2GB of memory needed for storing BF16 model parameter weights. And recall the fact that in standard Data Parallel Training, each data parallel process needs to keep a copy of the complete model, including model weights, gradients, and optimizer states. This means that we end up having redundant copies of optimizer states at each DP process, with each occupying 12 GB of memory for 1 billion parameters.
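The byte accounting above can be reproduced with a short sketch. The function and constant names below are assumptions for illustration; the per-parameter byte counts are the ones given in the text (BF16 is 2 bytes, FP32 is 4 bytes, and Adam keeps three FP32 values per parameter), and 1 GB is taken as 10^9 bytes to match the figures quoted.

```python
BYTES_BF16 = 2
BYTES_FP32 = 4


def dp_model_state_bytes(num_params: int) -> dict:
    """Per-device model-state memory for standard data parallel training
    with Adam and BF16/FP32 mixed precision, in bytes."""
    bf16_params = BYTES_BF16 * num_params
    bf16_grads = BYTES_BF16 * num_params
    # FP32 parameters + FP32 momentum + FP32 variance = 12 bytes per parameter (K = 12).
    optimizer_states = 3 * BYTES_FP32 * num_params
    return {
        "bf16_params": bf16_params,
        "bf16_grads": bf16_grads,
        "optimizer_states": optimizer_states,
        "total": bf16_params + bf16_grads + optimizer_states,
    }


breakdown = dp_model_state_bytes(1_000_000_000)
for name, size in breakdown.items():
    print(f"{name}: {size / 1e9:.0f} GB")
```

For 1 billion parameters this reports 2 GB, 2 GB and 12 GB for the three components, 16 GB in total, and every data parallel process pays that full amount.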

Now that we know that optimizer states occupy a huge redundant memory overhead, how do we optimize away this overhead? This is where DeepSpeed’s ZeRO kicks in, which we shall discuss next.

ZeRO Memory Efficient Optimizations

ZeRO stands for Zero Redundancy Optimizer. As we discussed, standard data parallel training results in redundant memory occupancy of model states at each data parallel process. ZeRO eliminates this memory redundancy by partitioning the model states across the data parallel processes. ZeRO consists of multiple stages of memory optimizations depending on the degree of partitioning of model states. ZeRO-1 partitions only the optimizer states. ZeRO-2 partitions both the optimizer states and the gradients. ZeRO-3 partitions all three model states namely optimizer states, gradients, and parameters across the data parallel processes. Since Habana’s SynapseAI v1.6 supports DeepSpeed ZeRO-2 stage, it enables partitioning of both optimizer states and gradients.

The ZeRO-1 stage partitions only the optimizer states across the data parallel processes. Given a DP training setup of degree Nd, we group the optimizer states into Nd equal partitions such that the ith data parallel process only updates the optimizer states corresponding to the ith partition. This means that each data parallel process only needs to store and update 1/Nd of the total optimizer states, and therefore only needs to update 1/Nd of the total parameters. At the end of each training step, an all-gather across the data parallel processes is performed so that every process has the fully updated parameters. Each data parallel process thus has its memory requirement for optimizer states cut down to 1/Nd of the earlier requirement. If the memory requirement for model states in a standard DP setup is 4N + KN bytes, where K is the memory constant associated with the optimizer and N is the number of model parameters, ZeRO stage-1 reduces it to 2N + 2N + (KN)/Nd. For a sufficiently high Nd, this approaches 4N. Considering a model with 1 billion parameters and the Adam optimizer, where K is 12, standard DP consumes 16GB of memory for model states, whereas with ZeRO stage-1 this reduces to about 4GB, a 4X reduction in memory overhead.
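The partition-update-gather pattern of ZeRO-1 can be illustrated with a small single-process simulation. This is only a sketch under simplifying assumptions, not DeepSpeed's implementation: the function names are made up, the ranks are simulated with a loop, and SGD with momentum stands in for Adam so that there is a single optimizer state per parameter.

```python
from typing import List, Tuple


def partition_bounds(num_params: int, num_ranks: int) -> List[Tuple[int, int]]:
    """Index range owned by each rank (assumes num_params divides evenly by Nd)."""
    assert num_params % num_ranks == 0
    shard = num_params // num_ranks
    return [(r * shard, (r + 1) * shard) for r in range(num_ranks)]


def zero1_step(params: List[float], avg_grads: List[float],
               momentum_shards: List[List[float]],
               lr: float = 0.1, beta: float = 0.9) -> List[float]:
    """One optimizer step where rank r stores and updates only shard r."""
    num_ranks = len(momentum_shards)
    updated_shards = []
    for rank, (start, end) in enumerate(partition_bounds(len(params), num_ranks)):
        state = momentum_shards[rank]      # only 1/Nd of the optimizer state lives here
        new_shard = []
        for j, i in enumerate(range(start, end)):
            state[j] = beta * state[j] + avg_grads[i]
            new_shard.append(params[i] - lr * state[j])
        updated_shards.append(new_shard)   # rank r updated only its own parameters
    # Simulated all-gather: every rank ends up with the full updated parameter list.
    return [p for shard in updated_shards for p in shard]


params = [1.0, 2.0, 3.0, 4.0]
momentum_shards = [[0.0, 0.0], [0.0, 0.0]]   # Nd = 2, two state values per rank
params = zero1_step(params, [1.0, 1.0, 1.0, 1.0], momentum_shards)
```

Each rank holds only two momentum values instead of four, yet after the gather the parameters are identical to what an unpartitioned optimizer would produce.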

The ZeRO-2 stage partitions both the optimizer states and the gradients across the data parallel processes. Recall that each data parallel process updates only the parameters corresponding to its own partition. This means that each data parallel process needs only the gradients corresponding to that partition, not all the gradients. Hence, as gradients of different layers become available during back-propagation, we reduce these gradients only on the data parallel process that owns the relevant parameter partition. In standard DP, this would have been an all-reduce operation; now it becomes a reduce operation per data parallel process. After the reduction is done, the gradients are no longer needed on the other processes, and their memory can be released. This reduces the memory required for gradients from 2N in standard DP to 2N/Nd with ZeRO stage-2.
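The change from all-reduce to a per-partition reduce can be sketched as follows. This is a simplified single-process illustration with an assumed function name; real implementations overlap the reduction with back-propagation and work on buckets of tensors, which this sketch does not attempt to model.

```python
from typing import List


def reduce_to_owners(local_grads: List[List[float]]) -> List[List[float]]:
    """local_grads[r] is the full gradient computed on rank r.
    Returns, for each rank, only the averaged gradients of the partition it owns."""
    num_ranks = len(local_grads)
    num_params = len(local_grads[0])
    assert num_params % num_ranks == 0, "assumes parameters divide evenly by Nd"
    shard = num_params // num_ranks
    owned = []
    for rank in range(num_ranks):
        start, end = rank * shard, (rank + 1) * shard
        # Reduce (average) this partition's gradients onto the owning rank only.
        owned.append([
            sum(g[i] for g in local_grads) / num_ranks
            for i in range(start, end)
        ])
    # Gradients outside a rank's own partition are not kept, so each rank
    # stores 1/Nd of the gradients instead of all of them.
    return owned


local_grads = [
    [1.0, 2.0, 3.0, 4.0],   # gradients computed on rank 0
    [3.0, 4.0, 5.0, 6.0],   # gradients computed on rank 1
]
owned_grads = reduce_to_owners(local_grads)
```

Here rank 0 ends up with the averaged gradients for the first two parameters and rank 1 with those for the last two, which is exactly what each needs to update its own partition.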

By applying just optimizer state partitioning, we brought the memory requirements of training an N-parameter model down to 2N (parameters) + 2N (gradients) + KN/Nd (optimizer states). Now, with both optimizer state and gradient partitioning in ZeRO stage-2, this reduces to 2N + 2N/Nd + KN/Nd. For the Adam optimizer, this becomes 2N + 14N/Nd. For sufficiently large Nd, this approaches 2N. For training a model with 1 billion parameters with the Adam optimizer, standard DP requires 16GB for model states, whereas with ZeRO stage-2 this becomes about 2GB, an 8X reduction in memory.
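The formulas for the three setups can be compared side by side for a finite Nd. The helper below is a hypothetical name introduced for illustration; it simply evaluates 2N + 2N + KN, 2N + 2N + KN/Nd and 2N + 2N/Nd + KN/Nd from the text, with 1 GB taken as 10^9 bytes.

```python
def model_state_bytes(num_params: int, dp_degree: int,
                      zero_stage: int, k: int = 12) -> float:
    """Per-process model-state memory in bytes.
    zero_stage 0 is standard DP; 1 and 2 are the ZeRO stages covered here."""
    if zero_stage not in (0, 1, 2):
        raise ValueError("only standard DP (0), ZeRO-1 and ZeRO-2 are modeled")
    params = 2.0 * num_params          # BF16 parameters, always replicated
    grads = 2.0 * num_params           # BF16 gradients
    optim = float(k) * num_params      # optimizer states (K = 12 for Adam)
    if zero_stage >= 1:
        optim /= dp_degree             # ZeRO-1: partition optimizer states
    if zero_stage >= 2:
        grads /= dp_degree             # ZeRO-2: also partition gradients
    return params + grads + optim


for stage in (0, 1, 2):
    gb = model_state_bytes(1_000_000_000, dp_degree=64, zero_stage=stage) / 1e9
    print(f"stage {stage}: {gb:.2f} GB")
```

With Nd = 64 and 1 billion parameters, this gives 16 GB for standard DP, roughly 4.19 GB for ZeRO-1 and roughly 2.22 GB for ZeRO-2, so the 4GB and 2GB figures are the limits that the per-process footprint approaches as Nd grows.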

DeepSpeed ZeRO-2 Availability on Habana SynapseAI Software Release 1.6

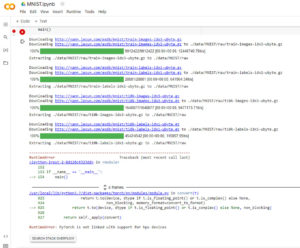

Support for memory efficient optimizations enables effective training of large models on the Habana Gaudi platform. DeepSpeed ZeRO-2 is available with the 1.6 release of Habana SynapseAI Software toolkit. You will need to use Habana’s fork of the DeepSpeed library that includes changes to add support for Habana SynapseAI and Gaudi. Check out Habana’s DeepSpeed Usage Guide for more information. On HabanaAI GitHub, we have published examples of training 1.5B and 5B parameter BERT models on Gaudi using DeepSpeed ZeRO-1 and ZeRO-2.
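As a rough starting point, a DeepSpeed configuration that turns on ZeRO stage 2 with BF16 might look like the sketch below. The `zero_optimization`, `bf16` and `train_micro_batch_size_per_gpu` keys are standard DeepSpeed configuration keys, but the batch size is a placeholder, and which options Habana’s fork accepts in a given release is an assumption here; please confirm against the DeepSpeed Usage Guide before relying on it.

```python
import json

# Hypothetical minimal configuration; the values are placeholders, not tuned settings.
ds_config = {
    "train_micro_batch_size_per_gpu": 8,   # placeholder per-device batch size
    "bf16": {"enabled": True},             # BF16 mixed precision training
    "zero_optimization": {
        "stage": 2,                        # 1 = optimizer states, 2 = + gradients
    },
}

# DeepSpeed configurations are commonly stored as JSON; this renders the dict as JSON text.
ds_config_json = json.dumps(ds_config, indent=2)
print(ds_config_json)
```

Switching `stage` to 1 selects optimizer state partitioning only, which corresponds to the ZeRO-1 behavior described earlier.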

Happy & Speedy Model Training with Habana Gaudi, SynapseAI and DeepSpeed!

References

- [1] https://docs.habana.ai/en/v1.6.0/Release_Notes/GAUDI_Release_Notes.html

- [2] https://www.deepspeed.ai/

- [3] https://www.microsoft.com/en-us/research/blog/zero-deepspeed-new-system-optimizations-enable-training-models-with-over-100-billion-parameters/

- [4] https://www.microsoft.com/en-us/research/blog/zero-2-deepspeed-shattering-barriers-of-deep-learning-speed-scale/

- [5] ZeRO: Memory Optimizations Toward Training Trillion Parameter Models. Samyam Rajbhandari, Jeff Rasley, Olatunji Ruwase and Yuxiong He.

- [6] DeepSpeed Usage Guide

- [7] Using DeepSpeed and Megatron to Train Megatron-Turing NLG 530B, the World’s Largest and Most Powerful Generative Language Model